The uncanny world of robots at home

Re-thinking robotic design part 1: social support robots are driving us into the uncanny valley. We don't need robots to look like humans.

Am I the only one here who has never wanted a robot to raise my kids? Am I the only one who thinks a robot could do more harm if it looks human?

The human brain is wired around faces: they’re central to how we read emotions, which is why we see faces in everyday objects. Now we are re-wiring our brains with our phones, without a real plan for the long-term effects. What happens when we give our phones a face? The feedback loop will be amplified even further.

This article considers the effects of robot design as an emotional tool and why we are not prepared at all for the repercussions. It’s a tour of the uncanny valley, robotic style, robotic examples, and where we can start.

The Uncanny Valley

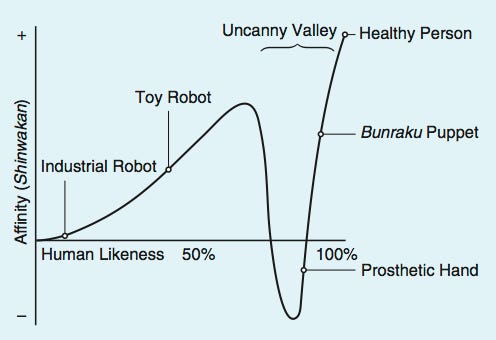

Its original name was bukimi no tani genshō, which roughly translates from Japanese as Valley of Eeriness. The Uncanny Valley is the drop in how much humans like robots along the path from unlike humans to nearly indiscernible from us: likability dips when the robots sit between awkward and perfect (uncanniness is a familiar eeriness).

Japanese roboticist Masahiro Mori is credited with the term. It does seem like quite a Japanese concept: observant, introspective, human, artistic, etc. I like Japan, but this is an aside. A visualization of it is below [source]. The vertical axis is how much a human will enjoy spending time with the robot, and the horizontal axis is how human the robot looks.

As of 2020, we are at the point where robots (examples come later):

a) are good at some tasks and way better than humans at others,

b) can be made to look eerily human when stationary (i.e., by their construction), but not in motion, which is still jerky and preliminary.

How does this work?

I found this useful summary of why the uncanny valley appears:

Mori's original hypothesis states that as the appearance of a robot is made more human, some observers' emotional response to the robot becomes increasingly positive and empathetic, until it reaches a point beyond which the response quickly becomes strong revulsion. However, as the robot's appearance continues to become less distinguishable from a human being, the emotional response becomes positive once again and approaches human-to-human empathy levels.

This area of repulsive response aroused by a robot with appearance and motion between a "barely human" and "fully human" entity is the uncanny valley. The name captures the idea that an almost human-looking robot seems overly "strange" to some human beings, produces a feeling of uncanniness, and thus fails to evoke the empathic response required for productive human–robot interaction.

The last sentence is the crucial one: a robot “fails to evoke the empathic response required for productive human-robot interaction.” To me there are two key phrases: a) evocation of an empathic response and b) an indication of productive human-robot interaction. This leads me to the questions: if making robots look human causes failed interactions, why make them look human at all? Is there no risk if they’re never made to look human? I think these are crucial to consider, and I expect the answer to be that the large majority of robots do not need to look human. The categories that benefit from humanness, like medical and social robots, need an increased level of scrutiny because those applications sit on a knife’s edge of risk.

Below is a fun experiment that measured reactions to a set of robots rated on a “mechano-humanness score.” The findings match the theory pretty well, except the authors may be forcing a cubic function onto the data, and there are strikingly few points in the actual uncanny valley. We’ll have to keep an eye on this type of data over time.

[Source: Mathur, Maya B.; Reichling, David B. (2016). "Navigating a social world with robot partners: a quantitative cartography of the Uncanny Valley". Cognition. 146: 22–32. doi:10.1016/j.cognition.2015.09.008. PMID 26402646.]
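To make the cubic concern concrete, here is a minimal sketch in Python. It is not the paper's analysis: the data points are made up, the -1 to 1 humanness scale is an assumption, and `fit_polynomial` and `sum_squared_error` are hypothetical helper names. It fits a line and a cubic to the same toy points by ordinary least squares and compares the errors.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations (pure Python).

    Returns coeffs where coeffs[k] multiplies x**k.
    """
    n = degree + 1
    # Normal equations A c = b, with A[i][j] = sum(x^(i+j)), b[i] = sum(y * x^i).
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = A[row][col] / A[col][col]
            for k in range(col, n):
                A[row][k] -= factor * A[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(A[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - tail) / A[i][i]
    return coeffs


def sum_squared_error(xs, ys, coeffs):
    """Total squared residual of the polynomial on the given points."""
    return sum(
        (y - sum(c * x ** k for k, c in enumerate(coeffs))) ** 2
        for x, y in zip(xs, ys)
    )


# Made-up toy data (NOT from the paper): humanness on an assumed -1..1 scale,
# likability rising, dipping, then rising again.
xs = [-1.0, -0.75, -0.5, -0.25, 0.0, 0.25, 0.5, 0.75, 1.0]
ys = [-0.6, -0.3, 0.0, 0.3, 0.4, 0.1, -0.3, 0.0, 0.7]

line = fit_polynomial(xs, ys, 1)
cubic = fit_polynomial(xs, ys, 3)

print("linear error:", sum_squared_error(xs, ys, line))
print("cubic error: ", sum_squared_error(xs, ys, cubic))
```

On the same points, a cubic can never have a larger squared error than a line, because the line is a special case of the cubic. So a better cubic fit on its own is weak evidence for a valley; the dip needs enough data points inside it to be convincing.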

Robotic style & avoiding the uncanny

What if we don’t need our robots to be purely human? We can focus on making them quirky (a friendly way of making things engaging), and matching the personality to the task can improve functionality. Let’s look at some designs, where they fall on the uncanny valley (or whether they’re off it), and in which direction the design pushes them.

Writer’s note - this half of the article was challenging to write. It feels like the idea of having a human face on the robot is being pulled up by how accessible the design is and pulled down by a dark cloud of potential downside. The upside of technology is readily apparent and constantly unfolding, so I try to analyze the risk in a measured way. Sometimes I think I do miss it; please comment if you have additions. I think my bias for and against certain robots may actually track the uncanny.

Starting bots

Starting historically, one of the first humanoid robots was Asimo from Honda in 2000 (the age of the webpage still matches the age of the robot). This robot was a breakthrough in terms of mechanical hardware and use but carried little risk of uncanniness. This is sort of a baseline.

Now, one of my favorites is a modern take on a social robot, and it nails it. See Paro, the supportive stuffed seal. Gentle noises, a soft construction, and pleasant to the eye: it’s like a puppy if you cross your eyes far enough. (Look at this headline about it: “Cuddling Robot Baby Seal Paro Proven to Make Life Less Painful.” What more do robots need to solve?) I honestly think simple social support robots will get worse from here: this one nails it as a gentle buddy that tries to nudge you to be better but isn’t a therapist solving all your problems. Making the move from a seal to a human support robot will be tough on the user.

Recent bots

Two articles caught my eye in the first half of 2020, with embedded tweets adding photos for context.

An exposé on retail jobs, robots, and how companies are making robots likable. [NYT]

This is really more of the same news cycle during COVID: robots replacing humans wherever they can. That’ll continue for a few years, but why do you need to put a face on a speaker reminding people to socially distance or on a manipulator moving objects on an assembly line? Familiarity and assumptions about design.

Pandemic playmates for kids at home. [WSJ]

This one is really creepy. It also came out that this company may have been collecting data on children (disclosure: I found no highly reputable sources for this). Just click the link above and watch a bit of it from the point of view of an innocent child trying to learn from, emulate, and attach to this thing (price point $1499). Another theme I want to touch on is: why do so many robotics companies seem to miss the mark?

Really, I have to ask in the context of 2020: what makes a good AI? With so much going on in the world, this is the bare minimum:

Functionality: it needs to work and do something of value.

Performance: it needs to be able to do what you want it to do without many barriers. This is the “how well does it work” category.

Character: people have an affinity for robots because they’re fun.

Safety: it needs an account of the short- and long-term consequences, or at least an idea of how to monitor them.

Navigating the valley

What we have learned so far is that designing your AI to be human has some risks. There are downstream discussions about making your AI "human-like" in ways other than appearance, such as ability. A podcast that stuck with me made this argument (roughly, not a direct quote):

What if the mediocre online chat tools of today presented themselves as employees from Asia who speak English as a second language? Would we be less critical of their mistakes?

The answer is most likely yes, for sociological reasons of its own, but I think this is an important point in robot design: character, how well they work, and what they do are all at play. Let me illustrate with two chatbots that may be more or less useful for a customer:

The near-perfect Google AI:

Character: this ceases to exist. This AI acts like a computer but still adds fake human noises like uhm and laughs, and so rapidly becomes eerie.

Function: answers basic questions about your product or day.

Performance: near-perfect, and improving by the day.

The far-off, quirky, shy chatbot:

Character: this robot has some personality that lets it own up to its mistakes and maybe have things you may want to discover about it.

Function: answers basic questions about your product or day.

Performance: slightly behind, maybe intentionally. Developing it further may make it less usable; who knows.

I would rather work with the second bot. It’s like the slow internet versus no internet conundrum: slow internet is worse. The quirky chatbot is moving in one direction on the uncanny valley chart, but I can’t tell which direction. Is it more or less human to have character? Would we detect false character?

This dilemma (which direction is technology moving in the uncanny valley?) is why I added the writer’s note above: it seemed like adding humanness to the robot enhanced its likability. Chatbots could just be further back along the uncanny valley curve (as Google continues to perfect its AI, it’ll start getting more likable again).

The case for Clippy (yes, I know it was really a failure)

Now let’s consider a famous AI that got some hate — Microsoft Office’s help tool: Clippy the Paperclip.

Character: through the roof. Almost too much — Clippy will interrupt you, and know it is doing so. The design is openly strange, which in some ways makes it more accessible.

Functionality: it is a general-purpose Microsoft help search bar.

Performance: not great, but it was the first of its kind.

Safety: TBD, you couldn’t turn it off.

If this had been a human aide in Microsoft Office 20 years ago, it would’ve taken a different course. It would’ve been competing with call centers and other forms of help, but by being unique it had the benefit of being judged with a clean slate. I consider Clippy a success because it opened up new territory, was memorable, occasionally useful, and didn’t serve to disadvantage any individuals or groups long term.

Obviously, its performance and aggressiveness limited Clippy, but it was a great character. I would love to see more companies release something memorable and safe.

Looking back a few years to see where the AIs of the world were, it’s pretty clear we’re on the first peak of the uncanny valley, and I’m not sure society is ready for the fall. Robots are getting more human, people are getting more addicted to their phones, and soon we will contend with the mixture of the two. Another conversation that pushes against my views on this topic can be found here (the last hour); it suggests that making robots human is worth it because that could make everything we do more meaningful.

I will continue this story of re-thinking robotics by talking about how the mathematical model behind most robots may be limiting their integration into homes.

Resources in the area

Podcasts

Lex Fridman with Kate Darling on Social Robots: This is a higher-level discussion including robot ethics, how humans should treat robots, the problems with personal robots, and deeper concepts like consciousness and mortality from the frame of a roboticist.

Lex Fridman with Rosalind Picard on Emotion in Robots: This is primarily about research on AI for emotion recognition and assistance. Thankfully, the podcast spends a lot of time on the potential ethical concerns of this field of work (people trying to read emotions for advertising, and other deleterious actors).

These two podcasts make the case (long-form podcast, so somewhat round-about in getting there) for a) why dealing with emotions in robotics is hard and b) why the robots we make may be self-limiting.

Movies

Big Hero 6: A good pep talk movie for experts or aspiring roboticists — just a fun story about what is good about making robots. Baymax looking gently human works.

Ex Machina: A good reminder of why we need to be careful in this area. People will make robots that people can fall in love with, and I don’t think there’s a point in giving people another vice this troubling.

Articles

Researchers designed a robot face to avoid the uncanny valley, and it is hideous. Just don’t make a human face?

Trying to make robots that are so human-like that we don’t notice they are robots. What could go wrong?

Hopefully, you find some of this interesting and fun. You can find more about the author here. Tweet at me @natolambert. I write to learn and converse.