Digital companies and the goal of at-home embodied AI

Learning how to learn, by letting autonomous agents interact with the world. Why big tech companies like Facebook and Google hire roboticists to bring life to their excess meeting rooms.

You’re wandering through the brother’s other tech office, and you see a moderately sized meeting room filled with robots. This is not what you expect when you visit a friend to try out the new micro-cafe in the office of their ad-tech startup (well, when visitors were allowed), but it is increasingly becoming a reality.

Large technology companies invest large amounts of money in exploratory research ($10-20 billion per year among the biggest players) and hold onto even more for a rainy day (Apple, Microsoft, Google, Amazon, and Facebook jointly have $550 billion in cash). At that scale, hiring a couple of robotics researchers is a drop in the ocean. It also brings more top talent under their roof, but I think that benefit is shorter-term than the horizon I am considering.

The outlook for robotics as an industry is near-exponential growth in impact over the next decade (or sooner, depending on how long the pandemic continues, as I wrote about in detail). The portion of this that technology companies are looking to capture is the robots embodying their algorithms in your home. As I wrote in Recommendations are a Game and Automated,

A general theme of recommender systems and these platforms is: if we can predict what the user wants to do, then we can make that feature into our system.

Extend this to agents that take up space in your life and home, and the problem compounds: you cannot escape a device by turning off your data or closing an app if it can come up to you and ask how you are doing.

I am a big proponent of these technologies, but it is important to be clear about (one important reason) why the large companies are investing heavily in the area. Autonomous agents can deliver many more huge gains in homes, including safety for at-risk populations living alone, help with chores, a conversational interface to your digital life (email), and more. We need to make sure we build this right.

Embodied AI

Giving autonomous agents the ability to interact with the physical world.

I think this is a more nuanced topic than only translating state-of-the-art approaches in artificial intelligence and machine learning: learning through physical interaction is the first type of learning we experience. Children learn what new objects are by touching them and seeing how those objects fit into their budding lives.

The task of learning in hardware has different constraints: samples are more valuable (we cannot run hardware forever), the results are visually interpretable (connecting well with how humans operate), and there are more existence proofs that such learning is possible. We are trying to mimic nature because it works, but there is no reason that embodied-machine intelligence cannot far surpass how we humans learn.

Building AI systems that are designed for hardware tunes the objectives and methods, creating a feedback loop with the more digital AI methods that we interact with every day, like computer vision and natural language processing. I will continue to open up this box of how learning robots will synergize with traditional machine learning research.

So, I want to expand the definition of embodied AI: learning how to learn, by letting autonomous agents have new ways of interacting with the world.

To wrap up: learn about Facebook’s robotics efforts, Facebook’s in-home exploration push (Habitat), Microsoft’s public research websites, Apple’s job postings, or Google Brain’s Robotics team. Amazon’s goals in the matter are much more direct (logistics, delivery, taking over more industries, etc.).

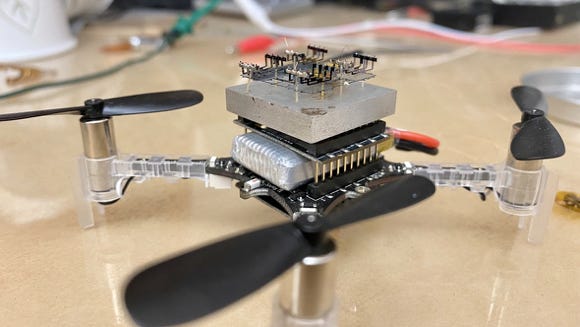

I am working to figure out how to safely have quadrotors (above) learn to fly in people’s homes.

Lay of the land for at-home robots

I am planning out a four-part series on what is limiting at-home robots and where people are likely to go wrong. This is the where, when, and how of embodied AIs.

The uncanny world of at-home robots: I need to address why it is so crazy that some robotics companies give their toys human faces. Also, I ask: why are support robots aimed at the most vulnerable groups, children and the elderly?

Don't restrict your robot to your worldview: How can we change how we train and build robots to make them more broadly useful?

Giving the algorithm the keys: What is limiting Alexa et al. from being very useful and taking over scheduling, routine purchasing, and more? There are more than just privacy concerns.

When personal robots don't suck: Summarizing my thoughts on the area and looking forward to where I see promise.

Please reply to this email, comment, Tweet, or send a carrier pigeon if you have thoughts and want to be part of this series.

The tangentially related

I don’t claim these are the best resources for following any one area. I can promise you’ll get good value for your time from them, and I will always bring up robotics news when it happens. Expect some maths, human feats, human optimization, technology, and more.

Classics in AI

The best old papers in AI, mathematics, and more. The Anatomy of a Large-Scale Hypertextual Web Search Engine – Google Research. The paper by two Stanford graduate students, Larry Page and Sergey Brin, that laid the groundwork for Google.

Quick Reads

Me when I am ready to write my dissertation. Open to applications for co-inhabiters.

A great follow-up after the last two posts on recommendations and apps. The best resource I have been pointed to that actually tells the story of TikTok and its algorithm.

Another example [WSJ] of weird at-home robots that got me back to writing on this theme over the next few weeks. Raising your kids with robots will have consequences when they realize the robot doesn’t have the capacity to care.

Books

The Botany of Desire - Michael Pollan: If you appreciate nature, gardening, or plants, you’ll love this book. Re-frames some assumed notions of human societal development from a plant’s-eye view: how plants influenced our decisions and progress.

Algorithms of Oppression - Safiya Umoja Noble: One of the better anti-racist technology books I have read. Goes explicitly into examples of Google’s results and suggested searches being blatantly tone-deaf.

I am listening to (or watching)

Peter Attia Drive - Lori Gottlieb: Understanding pain, therapeutic breakthroughs, and keys to enduring emotional health. I don’t think these topics get discussed enough, professionally or personally.

Sam Harris with Kathryn Paige Harden: Two scientists discussing differing views and how mainstream media can amplify false impressions. Always good to see people sitting down and discussing issues that can attract mainstream controversy (genetics).

Hopefully, you find some of this interesting and fun. You can find more about the author here. Tweet at me @natolambert. I write to learn and converse. Forwarded this? Subscribe here.